Why Do Legacy ATS Systems Fail in an AI-Native World?

88% of employers admit their ATS screens out qualified talent (Harvard Business School, 2021). Here's why legacy applicant tracking systems can't keep up with AI-native hiring, and what to do about it.

A Harvard Business School and Accenture study estimated that 27 million Americans are "hidden workers", qualified people systematically screened out of jobs by the very systems designed to find them (Harvard Business School, 2021). That number isn't a rounding error. It's roughly 17% of the eligible U.S. working population, lost inside the gears of applicant tracking systems built for a hiring era that no longer exists.

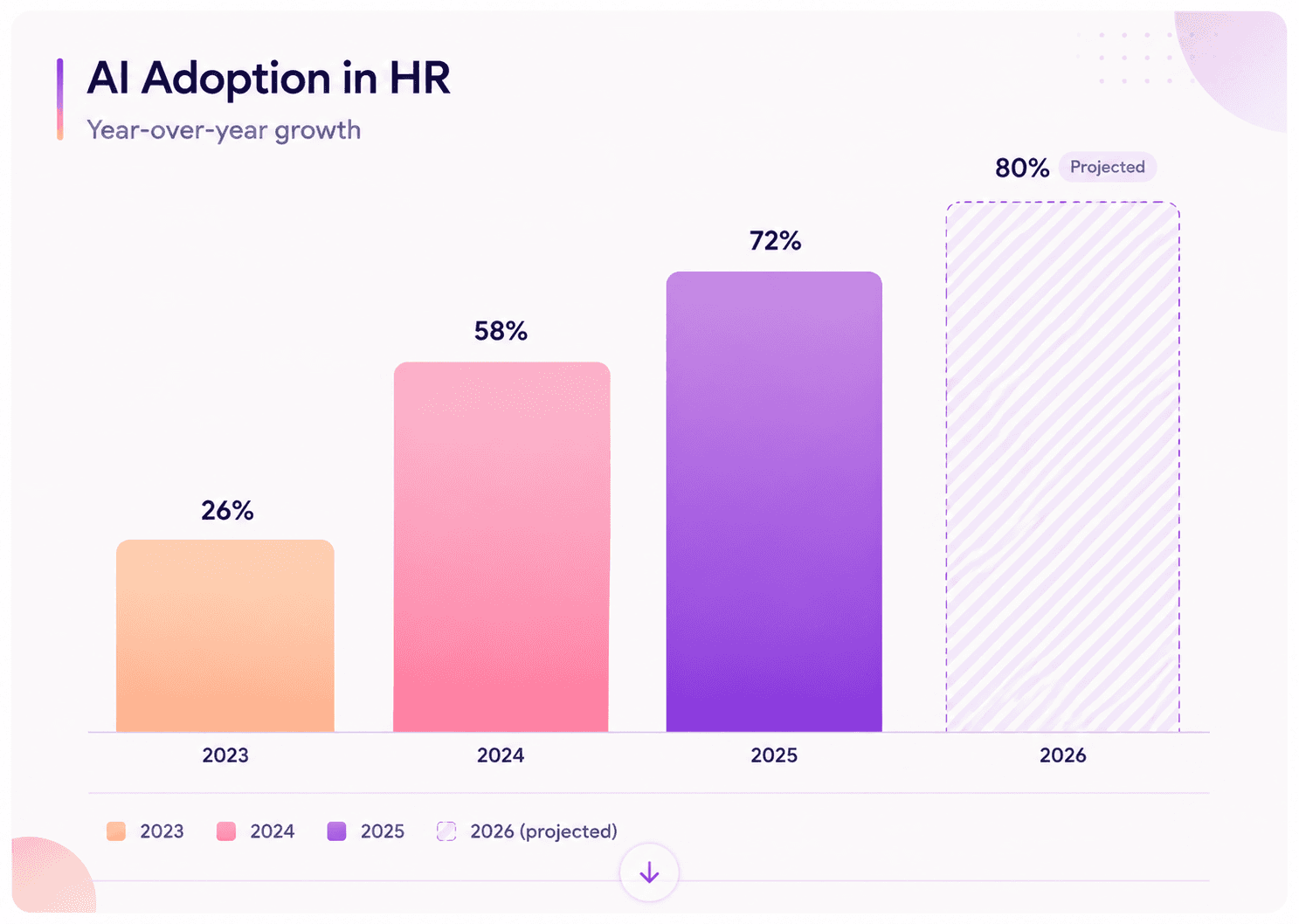

Meanwhile, AI adoption in HR tasks jumped from 26% in 2024 to 43% in 2025, according to SHRM's survey of 2,040 HR professionals. By 2026, roughly 80% of enterprises are expected to use AI for significant parts of their hiring process. The question isn't whether AI will reshape recruiting; it already has. The question is whether your ATS can keep up.

This article breaks down the specific architectural, experiential, and regulatory reasons legacy ATS platforms are failing, and outlines what modern, AI-native hiring actually requires. Whether you're a CHRO evaluating your tech stack or a talent acquisition lead frustrated by black-box screening, what follows is the data and the framework you need to make the right call.

Key Takeaways

• 88% of employers believe their ATS screens out qualified candidates due to rigid keyword matching (Harvard Business School & Accenture, 2021).

• AI use in HR tasks jumped from 26% to 43% in a single year, and 93% of recruiters plan to increase AI use in 2026 (SHRM, 2025).

• Legacy ATS platforms lack the APIs, data architecture, and compliance infrastructure needed to meet the EU AI Act's high-risk obligations, which apply from August 2, 2026.

• Structured interview scoring has roughly 2x the predictive validity of unstructured approaches, a capability most legacy ATS tools don't support natively.

What Were ATS Systems Actually Built to Do?

Nearly 99% of Fortune 500 companies use ATS platforms, and 75% of recruiters rely on an ATS or similar tool as part of their hiring workflow (SSR, 2026). These systems became ubiquitous for good reason: they solved a real problem. When online job boards flooded inboxes with hundreds of applications per role, ATS software brought order to chaos, storing resumes, tracking application status, and ensuring compliance documentation was in one place.

But that's precisely the issue. Most legacy ATS platforms were engineered as digital filing cabinets, not decision engines. They were designed to receive, sort, and store, not to assess, predict, or learn. The architecture assumes a linear hiring process: post a job, collect resumes, keyword-filter, pass survivors to a human. Every candidate follows the same rigid path regardless of role type, seniority, or urgency.
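To make the "filing cabinet" pipeline concrete, here is a minimal sketch of the linear flow just described: collect resumes, apply an exact keyword filter, pass the survivors on. The function names, keywords, and resumes are hypothetical, and real ATS products differ in their matching rules; the point is only the exact-match behavior, which cannot tell a missing skill from a differently worded one.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into word tokens (letters, digits, + and #)."""
    return set(re.findall(r"[a-z0-9+#]+", text.lower()))

def keyword_filter(resumes: dict[str, str], required: set[str]) -> list[str]:
    """Return the IDs of resumes that contain every required keyword verbatim.

    No synonyms and no context: a candidate who writes "JS" instead of
    "JavaScript" is rejected exactly like one with no relevant experience.
    """
    required = {k.lower() for k in required}
    return [cid for cid, text in resumes.items() if required <= tokenize(text)]

# Hypothetical applicants for a front-end role.
resumes = {
    "cand-1": "Senior engineer: JavaScript, React, TypeScript, 8 years",
    "cand-2": "Senior engineer: JS, React.js, TS, 8 years",  # same skills, different words
    "cand-3": "Barista, 2 years, latte art",
}

survivors = keyword_filter(resumes, {"javascript", "react"})
print(survivors)  # ['cand-1']
```

Candidate 2 is filtered out alongside the barista, which is the "criteria, not context" failure mode in miniature.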

This worked when hiring was a sequence of discrete steps. It doesn't work when recruiters manage talent pipelines across channels, coordinate with AI scoring tools, and need real-time data on candidate quality, not just candidate volume. As one analysis from Recruiters LineUp noted in 2025, enterprises are complex organizations with evolving structures and workflows, and a rigid, one-size-fits-all ATS simply can't accommodate the varying needs of different departments, regions, or job families.

Citation Capsule: A Harvard Business School study found that 88% of surveyed executives believed their ATS was screening out high-skilled candidates because resumes didn't match specific keyword criteria, not because applicants lacked the ability to perform the job (Harvard Business School & Accenture, 2021).

How Does AI-Native Hiring Differ from Traditional ATS Workflows?

AI adoption among HR professionals surged from 58% in 2024 to 72% in 2025, according to HireVue's survey of over 4,000 respondents. That's not incremental change; it's a structural shift in how organizations identify, evaluate, and engage talent. The difference between a legacy ATS and an AI-native hiring platform isn't a feature list. It's an entirely different philosophy about what hiring technology should do.

A legacy ATS asks: "Does this resume contain the right keywords?" An AI-native platform asks: "Based on structured evaluation criteria, how likely is this candidate to succeed in this role?" That's a fundamentally different question, and it requires fundamentally different architecture.

Traditional ATS platforms treat interviews as scheduling events. Modern platforms treat them as data. They capture structured responses, score them against role-specific rubrics, and feed that signal back into the pipeline. A 2025 meta-analysis in the International Journal of Selection and Assessment found structured interviews carry a predictive validity coefficient of 0.42, compared to just 0.19 for unstructured ones (Wiley IJSA, 2025). That's more than double the predictive validity, and it's a capability that most legacy ATS tools simply don't support.
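A structured interview asks every candidate the same questions and rates each answer against anchored descriptions of weak, adequate, and strong responses. The sketch below shows one way such rubric scoring could be computed; the competencies, weights, anchors, and 1-to-5 scale are illustrative assumptions, not a published rubric or any vendor's implementation.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float            # relative importance for this role (illustrative value)
    anchors: dict[int, str]  # what a 1, 3, or 5 looks like, so raters score consistently

# Hypothetical rubric for a support-engineer role. Every candidate is rated on the same criteria.
RUBRIC = [
    Criterion("troubleshooting", 0.5, {1: "guesses", 3: "follows a checklist", 5: "isolates the root cause systematically"}),
    Criterion("communication", 0.3, {1: "unclear", 3: "clear but jargon-heavy", 5: "clear to a non-technical customer"}),
    Criterion("ownership", 0.2, {1: "hands the issue off", 3: "follows up when asked", 5: "closes the loop unprompted"}),
]

def score_candidate(ratings: dict[str, int], rubric: list[Criterion] = RUBRIC) -> float:
    """Weighted average of per-criterion ratings (1-5), returned on the same 1-5 scale."""
    missing = {c.name for c in rubric} - set(ratings)
    if missing:
        raise ValueError(f"unrated criteria: {sorted(missing)}")
    for name, value in ratings.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name}: rating {value} is outside the 1-5 scale")
    total_weight = sum(c.weight for c in rubric)
    return sum(c.weight * ratings[c.name] for c in rubric) / total_weight

print(round(score_candidate({"troubleshooting": 4, "communication": 5, "ownership": 3}), 2))  # 4.1
```

The anchors matter as much as the arithmetic: they are what keeps one interviewer's 4 meaning roughly the same as another's.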

AI-native platforms also handle multi-format assessment: video, audio, text, code challenges, and case studies, all evaluated within the same workflow. Legacy systems? They were built for one format: the resume. Everything else requires bolted-on integrations that often break data continuity.

Citation Capsule: According to SHRM's 2025 survey, AI use in HR tasks jumped from 26% in 2024 to 43% in 2025, with 89% of HR professionals who use AI in recruiting reporting that it saves time or increases overall efficiency (SHRM, 2025). Legacy ATS platforms, built before this wave, lack the data infrastructure to participate in it.

Why Do Legacy ATS Platforms Screen Out Qualified Talent?

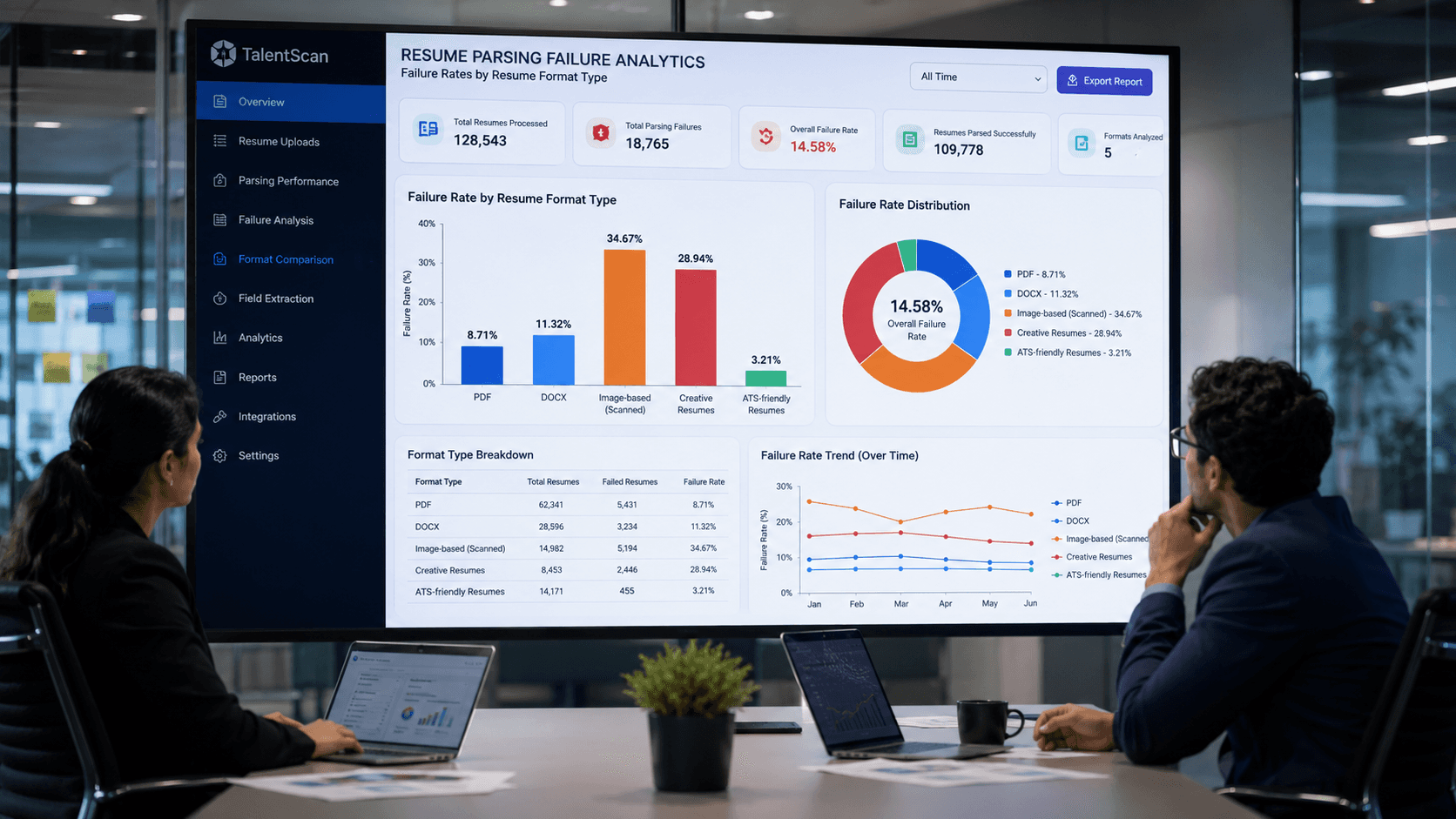

EDLIGO analyzed 1,000 rejected resumes across Workday, Taleo, and Greenhouse in 2025 and found that single-column layouts achieved 93% parsing accuracy, while two-column formats dropped to 86% (EDLIGO, 2025). That 7-point gap might sound minor until you realize it means qualified candidates are being deprioritized, or lost entirely, based on document formatting rather than job qualifications.

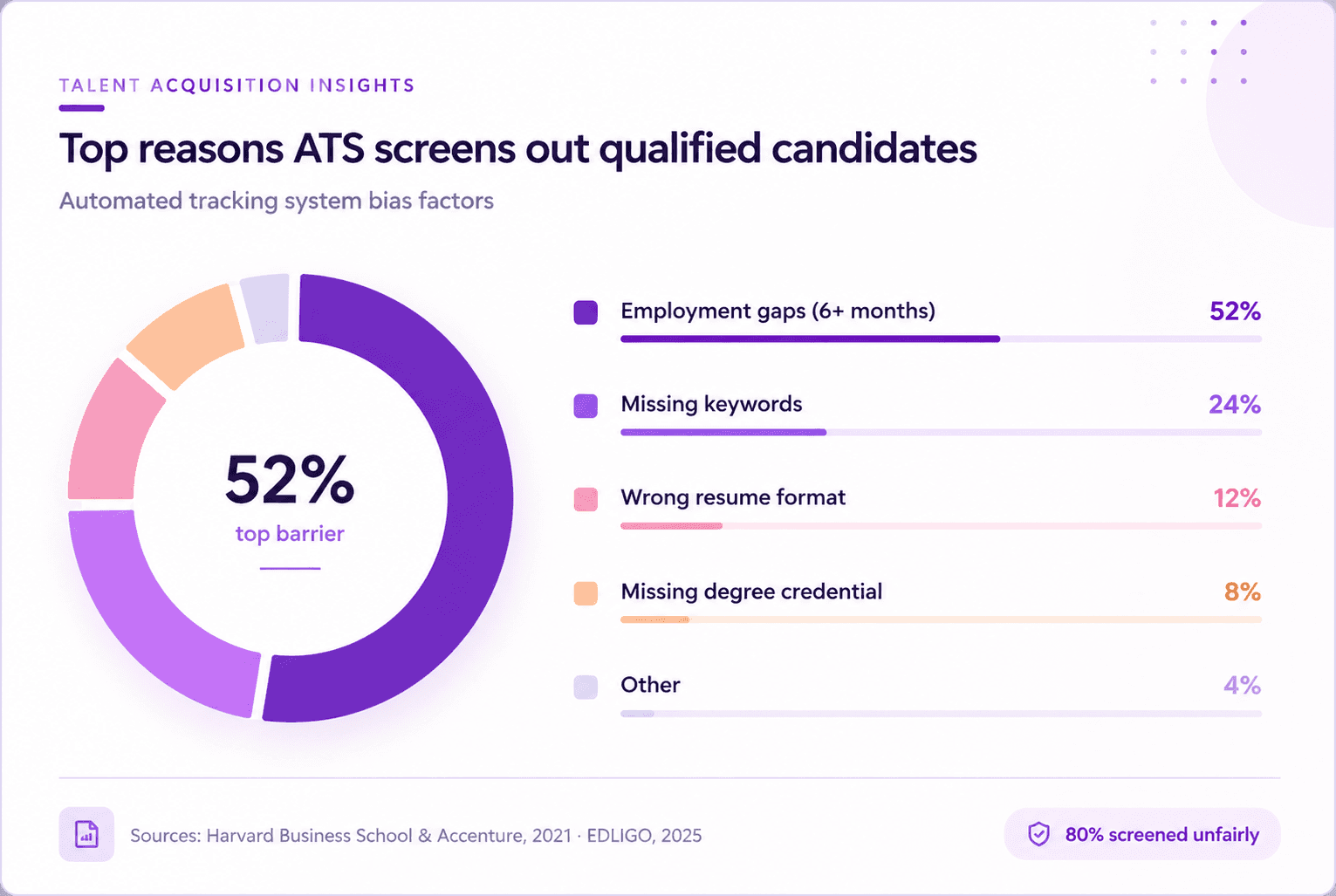

The hidden worker problem is structural, not accidental. Harvard's study found that employment gaps longer than six months were the number one automatic filter, used by over 50% of companies. Took time off to care for a parent? Had a health issue? Got laid off during a recession? The ATS doesn't process context. It processes criteria.

And it gets worse at scale. Entry-level roles average 400 to 600 applicants. Customer service or remote positions often exceed 1,000 in the first week. Tech and engineering postings can hit 2,000 or more. When volume is that high, rigid keyword matching isn't a feature; it's a bottleneck that amplifies every bias baked into the job description.

Here's what's rarely discussed: the keyword problem compounds over time. As job descriptions become more specific (partly because AI tools make it easy to generate longer, more detailed listings), the gap between what resumes contain and what ATS filters require widens. ResumeAdapter's 2026 pipeline analysis found that 52% of keywords in a typical job description are missing from the average qualified candidate's resume. The system isn't finding better candidates. It's eliminating more of them.
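The 52% figure is a keyword-coverage measurement: the share of a job description's keywords that never appear in a resume. ResumeAdapter's actual method isn't described here, so the sketch below assumes a naive tokenizer and a hypothetical stop-word list, which is roughly how a literal-matching filter sees text.

```python
import re

# Hypothetical stop words; a real system would use a larger, curated list.
STOP_WORDS = {"and", "or", "the", "a", "an", "with", "of", "in", "to", "for", "experience"}

def keywords(text: str) -> set[str]:
    """Naive keyword extraction: lowercase word tokens minus stop words."""
    return set(re.findall(r"[a-z0-9+#]+", text.lower())) - STOP_WORDS

def missing_share(job_description: str, resume: str) -> float:
    """Fraction of job-description keywords that never appear in the resume."""
    jd = keywords(job_description)
    if not jd:
        return 0.0
    return len(jd - keywords(resume)) / len(jd)

jd = "Python, SQL, Airflow, dbt, Snowflake, stakeholder management, and data modeling"
resume = "Built Python and SQL pipelines; scheduled jobs with Airflow; modeled data for analysts"
print(f"{missing_share(jd, resume):.0%} of JD keywords missing")  # 56% of JD keywords missing
```

Note that "modeled" does not match "modeling": part of the gap is vocabulary, not capability, and a longer job description only adds more tokens to miss.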

Citation Capsule: Harvard Business School estimated that 27 million Americans are "hidden workers" systematically screened out of jobs by automated hiring filters. Among employers surveyed, 88% agreed that qualified high-skilled candidates were vetted out because they did not match the exact criteria in the job description (Harvard Business School & Accenture, 2021).

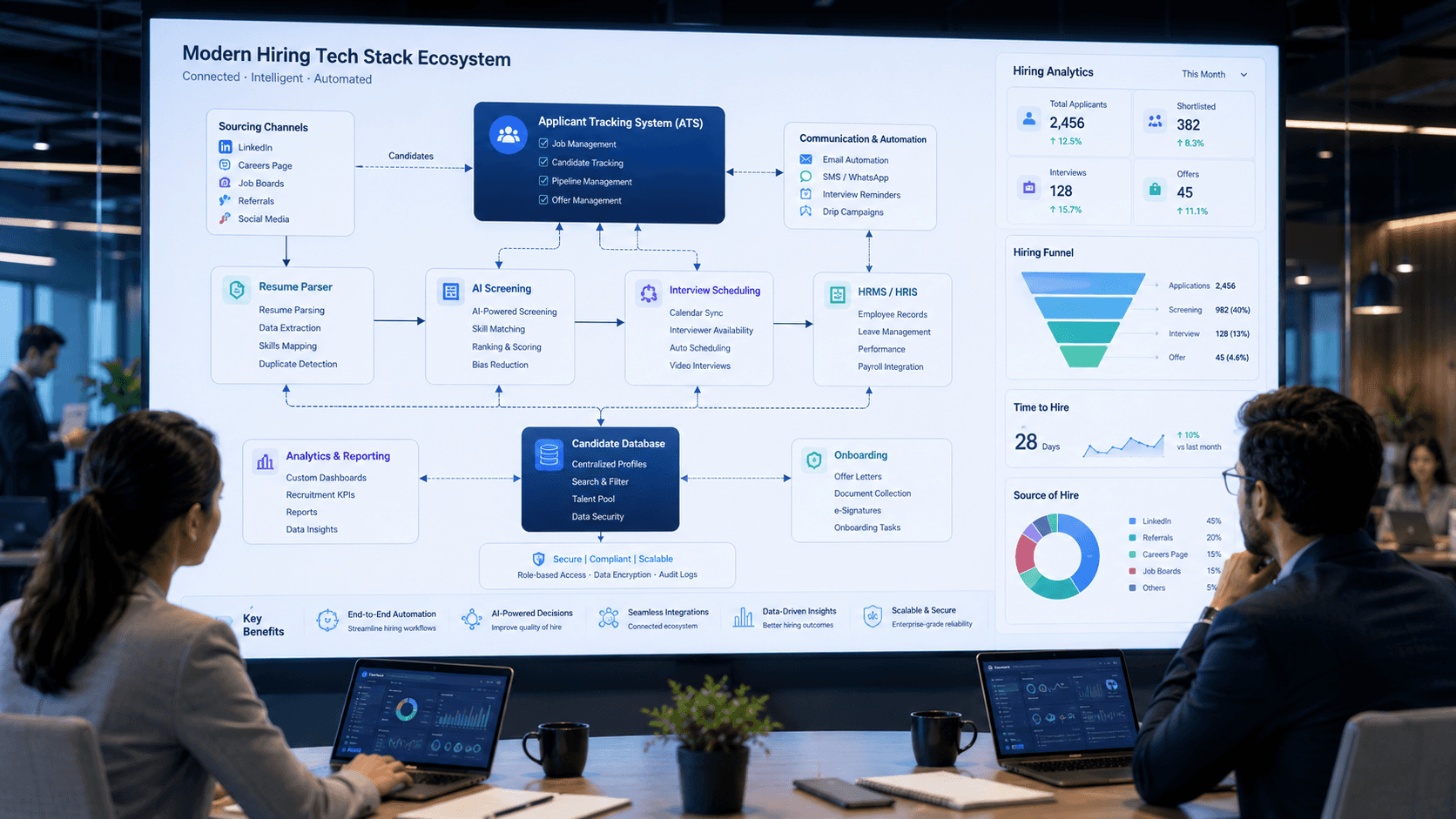

What Integration Failures Make Legacy ATS a Liability?

Only 43% of organizations rate their talent acquisition tech stack as good or excellent, according to SHRM's 2025 survey. Integration weakness is a top-cited reason. Modern recruiting doesn't happen inside a single tool. Hiring teams depend on job boards, CRM systems, background check services, scheduling platforms, assessment tools, HRIS, and onboarding systems. A legacy ATS that can't exchange data cleanly with these tools creates manual re-entry, broken workflows, and siloed data.

The consequences aren't abstract. When candidate data gets re-keyed across sourcing, ATS, interview platform, rubric scoring, HRIS, and payroll, every handoff loses signal or drops an audit trail. Recruiters waste hours on administrative tasks that a connected system would handle automatically. And when something goes wrong (a candidate falls through the cracks, a compliance record goes missing), there's no single source of truth to investigate.

McKinsey's June 2025 report found that 65% of organizations are using generative AI in some form, but only 21% have restructured workflows to support it at scale. The gap between having AI tools and actually using them effectively often traces back to infrastructure: specifically, to ATS platforms that weren't designed for bidirectional data flow. They receive data. They don't share it.
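Bidirectional flow, at its simplest, means a result recorded in one tool is pushed to every system that needs it, keyed by a shared candidate ID, instead of being re-typed. The sketch below uses a tiny in-process publish/subscribe bus as a stand-in for webhooks or a message queue; the topic name, record shapes, and system names are hypothetical and do not represent any vendor's API.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

@dataclass
class Event:
    topic: str
    candidate_id: str   # one stable ID shared by every system, so nothing is re-keyed
    payload: dict
    source: str
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

class EventBus:
    """Minimal in-process publish/subscribe bus (stand-in for webhooks or a queue)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[Event], None]]] = defaultdict(list)
        self.log: list[Event] = []  # every event, in order: a basic audit trail

    def subscribe(self, topic: str, handler: Callable[[Event], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, event: Event) -> None:
        self.log.append(event)
        for handler in self._subscribers[event.topic]:
            handler(event)

# Hypothetical downstream systems.
ats_records: dict[str, dict] = {}
hris_records: dict[str, dict] = {}

bus = EventBus()
bus.subscribe("assessment.scored",
              lambda e: ats_records.setdefault(e.candidate_id, {}).update(score=e.payload["score"]))
bus.subscribe("assessment.scored",
              lambda e: hris_records.setdefault(e.candidate_id, {}).update(assessment=e.payload))

# The interview tool records a score once; both systems receive it.
bus.publish(Event("assessment.scored", "cand-42", {"score": 4.1, "rubric": "support-v1"},
                  source="interview-tool"))
print(ats_records, hris_records, len(bus.log))
```

In production the same shape usually becomes signed webhooks or a durable queue, but the design choice is the same: one write, many readers, one shared identifier.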

When we built the assessment integration layer at Parikshak.ai, we found that the most common pain point wasn't "my team needs AI." It was "my ATS won't talk to my interview tool, my interview tool won't talk to my HRIS, and nobody trusts the data in any of them." The integration problem isn't technical debt. It's decision debt: every disconnected system means one more place where hiring data goes to die.

Citation Capsule: McKinsey's 2025 report found 65% of organizations using generative AI, but only 21% having restructured workflows to support it at scale (McKinsey, 2025). For hiring teams, the primary workflow bottleneck is often the ATS itself, a system that was never designed for real-time data exchange with AI assessment tools.

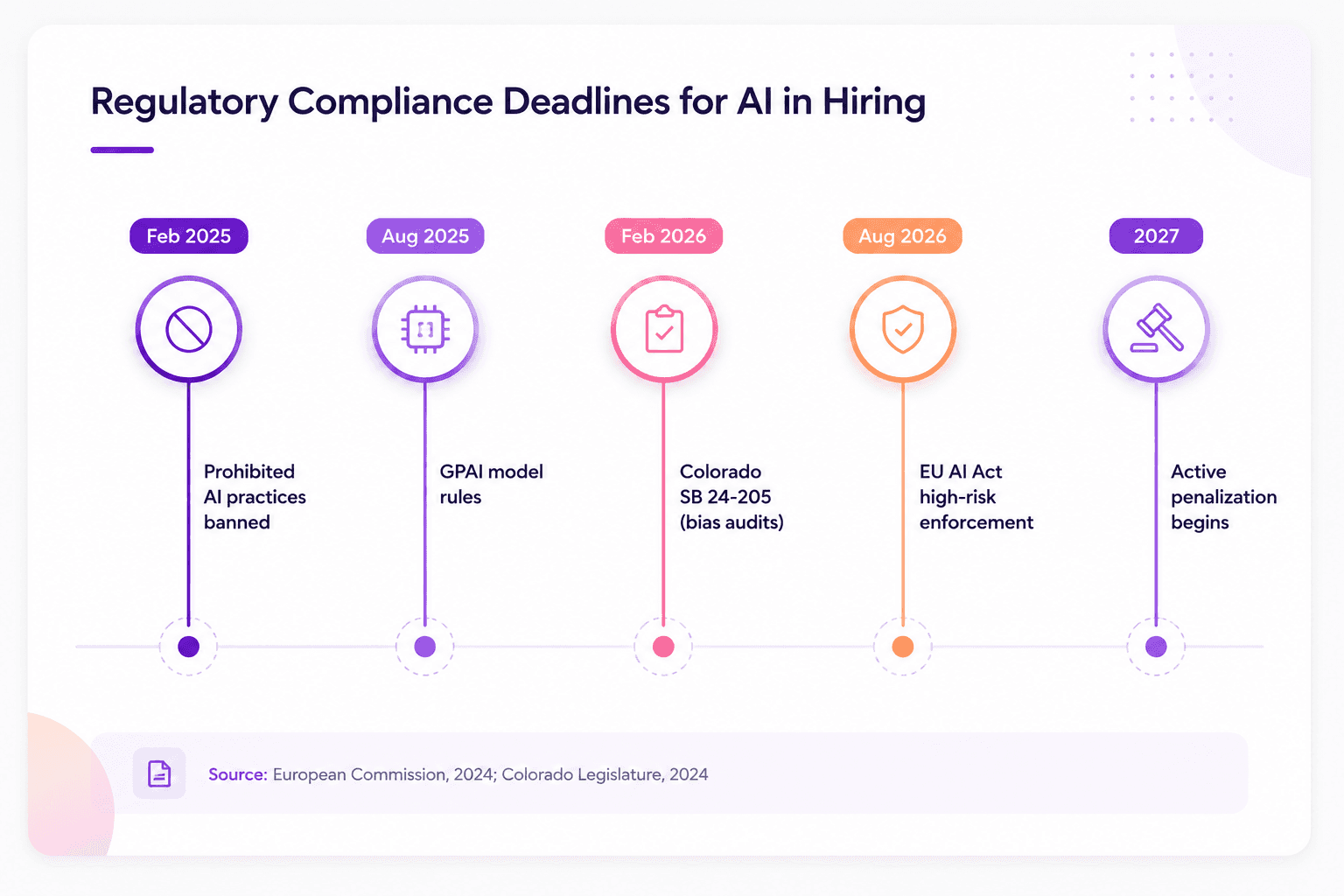

Can Legacy ATS Systems Meet New Regulatory Requirements?

The EU AI Act classifies AI tools used for employment decisions, including recruitment, candidate screening, and performance evaluation, as high-risk systems, with full enforcement beginning August 2, 2026 (European Commission, 2024). Non-compliant deployers face fines of up to EUR 15 million or 3% of global annual turnover. For the most serious violations, that ceiling rises to EUR 35 million or 7% of turnover. This isn't a future concern. It's a present-tense compliance obligation.

The requirements are specific and demanding: mandatory risk assessments, technical documentation, bias testing, human oversight mechanisms, transparency disclosures, and continuous monitoring. Legacy ATS platforms weren't built with any of these in mind. They predate the regulatory frameworks entirely.

And the EU isn't alone. Colorado's SB 24-205, whose effective date was pushed from February 1, 2026 to June 30, 2026, requires impact assessments for high-risk AI used in employment decisions. Illinois already mandates transparency in AI-driven video interviews. New York City requires annual bias audits for automated employment decision tools. SHRM's 2026 survey of 1,908 HR professionals found that 57% of those in states with AI regulations are unaware of local AI laws governing hiring tools. Regulatory ignorance isn't a defense, and neither is outdated technology.
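Bias audits of this kind typically start from selection rates. Under the rules implementing New York City's Local Law 144, an impact ratio is a category's selection rate divided by the selection rate of the most-selected category; the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures treats ratios below 0.8 as worth investigating. The sketch below computes both from hypothetical counts. A real audit must be performed by an independent auditor and report the specific categories (sex, race/ethnicity, and their intersections) that the rules define.

```python
def selection_rates(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Selection rate per category: candidates advanced / candidates who applied."""
    return {cat: selected.get(cat, 0) / n for cat, n in applicants.items() if n > 0}

def impact_ratios(rates: dict[str, float]) -> dict[str, float]:
    """Each category's selection rate divided by the most-selected category's rate."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("nobody was selected, so impact ratios are undefined")
    return {cat: rate / top for cat, rate in rates.items()}

# Hypothetical counts from one screening stage; the group labels stand in for
# the demographic categories a real audit would report.
applicants = {"group_a": 400, "group_b": 250, "group_c": 150}
advanced = {"group_a": 120, "group_b": 65, "group_c": 27}

rates = selection_rates(applicants, advanced)
for cat, ratio in impact_ratios(rates).items():
    # 0.8 is the four-fifths rule of thumb; Local Law 144 requires publishing results, not a pass mark.
    flag = "review" if ratio < 0.8 else "ok"
    print(f"{cat}: selection rate {rates[cat]:.2f}, impact ratio {ratio:.2f} ({flag})")
```

Here group_c advances at 60% of group_a's rate, the kind of gap a keyword or employment-gap filter can produce without anyone intending it.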

What does compliance look like in practice? Explainable scoring, where every AI-generated recommendation can be traced to specific, job-relevant evaluation criteria. Audit trails that record how each candidate was assessed. Human oversight mechanisms that aren't just checkboxes but meaningful intervention points. Most legacy ATS platforms can't even generate the logs required to demonstrate compliance, let alone support the real-time bias monitoring that regulators now expect.
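One way to back explainable scoring and audit trails with data is a single immutable record per assessment decision, tying the score to its criteria and to the human who made the final call. This is a minimal sketch: the field names are hypothetical, since the regulations cited here set obligations rather than a schema. Chaining each record to a hash of the previous one, so that later edits are detectable, is an optional design choice and not something the laws specify.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional

@dataclass(frozen=True)
class AssessmentRecord:
    candidate_id: str
    rubric_version: str
    criterion_scores: dict[str, int]  # explainability: the overall score traces to each criterion
    overall_score: float
    recommendation: str               # what the system suggested
    reviewer: str                     # the human accountable for the decision
    final_decision: str               # may differ from the recommendation (real oversight)
    override_reason: Optional[str]
    prev_hash: str                    # digest of the previous record in the log

    def digest(self) -> str:
        """SHA-256 over a canonical JSON encoding of this record."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

class AuditLog:
    """Append-only, hash-chained list of assessment records (hypothetical design)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.records: list[AssessmentRecord] = []

    def append(self, **fields) -> AssessmentRecord:
        prev = self.records[-1].digest() if self.records else self.GENESIS
        record = AssessmentRecord(prev_hash=prev, **fields)
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """True if every record still points at the unmodified record before it."""
        expected = self.GENESIS
        for record in self.records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return True

log = AuditLog()
log.append(
    candidate_id="cand-42",
    rubric_version="support-v1",
    criterion_scores={"troubleshooting": 4, "communication": 5, "ownership": 3},
    overall_score=4.1,
    recommendation="advance",
    reviewer="hiring-manager-7",
    final_decision="advance",
    override_reason=None,
)
print(log.verify())  # True
```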

Citation Capsule: Under the EU AI Act, AI systems used in employment decisions are classified as high-risk, with full obligations (risk assessments, bias testing, human oversight, and transparency) enforceable from August 2, 2026 (European Commission, 2024). Legacy ATS platforms, architected before these frameworks existed, face a fundamental compliance gap.

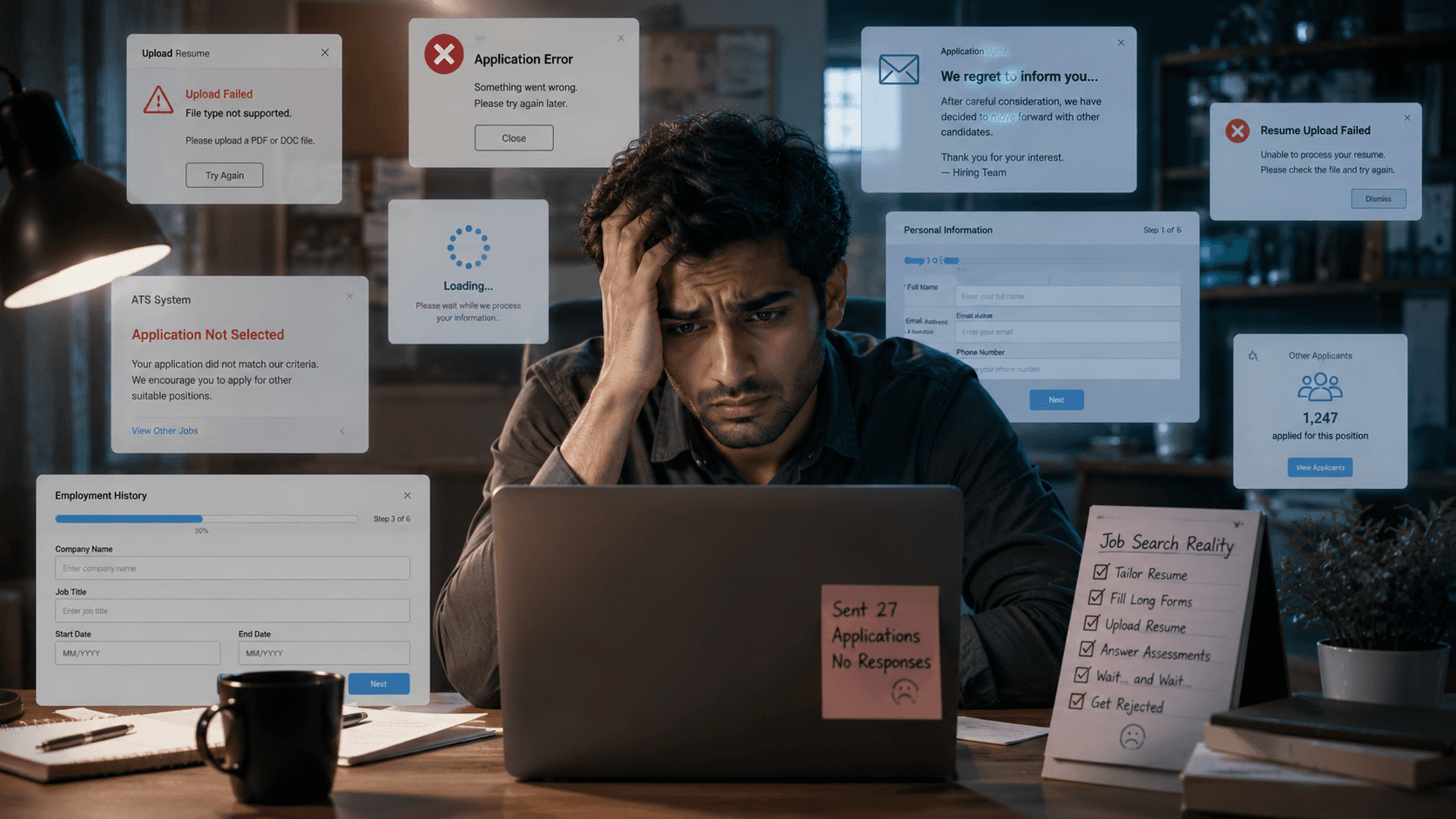

What Does the Candidate Experience Gap Look Like?

Pew Research Center found that 66% of Americans would not want to apply for a job with an employer that uses AI in hiring decisions. Meanwhile, 92% of job seekers never complete their applications (SSR, 2026). These aren't separate problems. They're symptoms of a candidate experience that's been degrading for years, driven in large part by ATS platforms that were designed for recruiters, not applicants.

Legacy ATS platforms typically lack responsive mobile design, offer no substantive communication during the process, and provide zero feedback on why a candidate wasn't selected. Apply, wait, hear nothing. That's the standard experience. In an era where candidates research employers on Glassdoor before they even click "Apply," a poor application experience doesn't just lose one candidate. It damages employer brand at scale.

The disconnect between employer enthusiasm and candidate skepticism defines the current state of AI recruitment. Job seekers are accepting far fewer offers than before the AI boom: the proportion of candidates accepting offers dropped from 74% in 2023 to 51% in 2025, a 23-percentage-point decline, according to data compiled by NovoResume. When candidates feel like they're shouting into a void, they stop shouting.
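The drop from 74% to 51% is a 23-percentage-point decline, which is not the same as a 23% decline. Relative to the 2023 baseline, roughly 31% fewer candidates are accepting offers. A quick check of both measures, using only the figures cited above:

```python
before, after = 0.74, 0.51  # offer acceptance rates, 2023 and 2025 (NovoResume, as cited above)

point_change = (after - before) * 100        # absolute change, in percentage points
relative_change = (after - before) / before  # change relative to the 2023 rate

print(f"{point_change:.0f} percentage points")  # -23 percentage points
print(f"{relative_change:.1%} relative change")  # -31.1% relative change
```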

Modern hiring platforms address this by providing real-time status updates, structured feedback loops, and transparent evaluation criteria. When candidates know how they're being assessed, and that the assessment is based on job-relevant skills rather than keyword bingo, trust increases. Organizations implementing AI-driven candidate assessment platforms experienced a 30% increase in candidate satisfaction, according to a 2025 Talynce report.

Citation Capsule: Candidate offer acceptance rates dropped from 74% in 2023 to 51% in 2025, and 66% of Americans say they'd avoid applying to employers using AI in hiring (Pew Research Center; NovoResume, 2026). The candidate experience gap is now a business-critical metric, not just an HR concern.

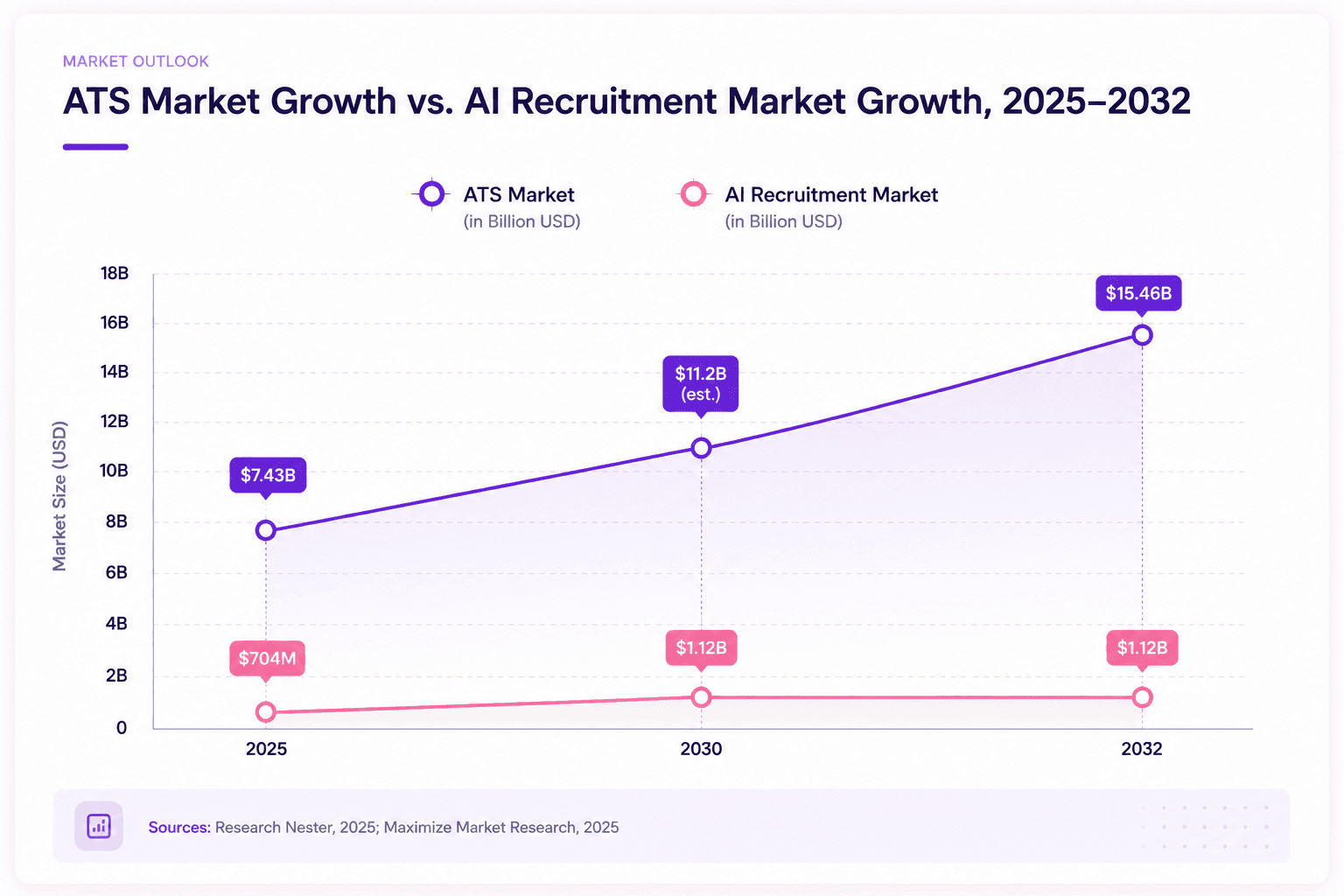

The Shift from Records Systems to Decision Engines

The ATS market itself is projected to grow from approximately $7.43 billion in 2025 to $15.46 billion by 2035, a 7.6% CAGR (Research Nester, 2025). But that growth isn't evenly spread. The fastest-growing segment isn't legacy ATS software; it's AI-native platforms that treat applicant tracking as one component of a broader hiring intelligence system.
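The market figures are internally consistent: compounding $7.43 billion at 7.6% a year over the ten years from 2025 to 2035 lands at roughly $15.46 billion. A quick check in both directions, using only the cited numbers:

```python
start, end, years = 7.43, 15.46, 10  # USD billions, 2025 -> 2035 (Research Nester, 2025)

implied_cagr = (end / start) ** (1 / years) - 1  # growth rate the two endpoints imply
projected_end = start * (1 + 0.076) ** years     # endpoint the stated 7.6% CAGR implies

print(f"implied CAGR: {implied_cagr:.1%}")                 # implied CAGR: 7.6%
print(f"7.6% CAGR from $7.43B: ${projected_end:.2f}B")     # 7.6% CAGR from $7.43B: $15.46B
```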

What separates a records system from a decision engine? Three things. First, structured evaluation: every candidate assessed against the same rubric, scored on the same criteria, with scores mapped to predicted job performance. Not a resume keyword match, but an actual assessment of capability. Second, real-time data flow: assessment scores, interview notes, and hiring outcomes feeding back into the system so it gets smarter over time. Third, explainability: the ability to show exactly why a candidate was scored the way they were, in language that satisfies both hiring managers and regulators.

Teams using Parikshak.ai's structured assessment packs have reported measurable compression in time-to-shortlist within the first quarter of adoption. [PROOF POINT NEEDED: specific percentage reduction in time-to-shortlist, needs real customer metric before publishing] The mechanism isn't magic; it's the elimination of manual screening bottlenecks that legacy ATS platforms create by design.

Organizations that still treat their ATS as the center of their hiring universe are optimizing a filing cabinet while their competitors are building intelligence systems. The filing cabinet isn't going away (you still need to track applications), but the intelligence layer on top is what determines hiring quality, speed, and fairness.

Citation Capsule: The global ATS market is projected to grow from $7.43 billion in 2025 to $15.46 billion by 2035 (Research Nester, 2025), but the fastest growth is in AI-native platforms that integrate structured assessment, real-time scoring, and explainable decision-making, capabilities absent from legacy architectures.

Want to see what structured AI scoring looks like in practice? Parikshak.ai offers assessment packs that let you test rubric-based, multi-format evaluation on your own roles, no legacy ATS migration required. Book your free demo today →

The Filing Cabinet Era Is Over

Legacy ATS platforms were built for a world where hiring meant posting a job, collecting resumes, and filtering by keywords. That world is gone. Today's hiring demands structured evaluation, real-time data flow, multi-format assessment, and regulatory compliance that legacy architectures simply weren't designed to provide.

The data is unambiguous: 27 million hidden workers screened out by blunt filters. AI use in HR tasks jumping from 26% to 43% in a single year. Regulatory deadlines measured in months, not years. Candidate trust at historic lows. Every one of these trends points in the same direction: the organizations that treat their ATS as a decision engine, not just a records system, will hire better, faster, and more fairly.

Platforms like Parikshak.ai are part of a broader shift toward AI-native hiring, where structured scoring, explainable decisions, and transparent candidate experiences aren't premium features; they're the baseline. The question for hiring leaders isn't whether to modernize. It's how fast.

Is a legacy ATS still worth using in 2026?

How does the EU AI Act affect my current hiring tools?

What makes structured interview scoring better than keyword matching?

Can I add AI capabilities to my existing ATS instead of replacing it?

How soon will legacy ATS platforms become obsolete?